In yesterday's article we saw what a perceptron is in Javascript.

My plan for today's post is to build the most basic neural network in Javascript. We will do this with plain old vanilla Javascript, without any other libraries such as TensorFlow.js.

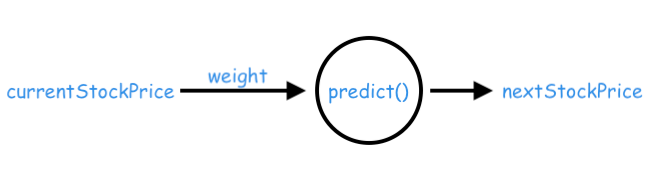

Our perceptron will take today's price of a stock share as its input and, based on it, try to predict what the price will be tomorrow.

So, we will have:

- a one-neuron network

- one input (the current price of the stock)

- one output (the predicted stock price)

Keep in mind that this example is super simplified and cannot be used in a real application. Its purpose is to give a very basic overview of how neural networks work under the hood.

So, let's get to work!

Setting up the Javascript neural network

Most of your work (as an ML person) will revolve around how well this single predict function performs:

```javascript
const predict = (data, weight) => data * weight
```

This is the core of our model!

But what is the weight parameter? It is the number our input gets multiplied by, and without good weights the model cannot approximate the function we are trying to estimate, which in our case is the evolution of the stock price. In fact, a very big chunk of Machine Learning work is figuring out what these weights should be.

We will see in tomorrow's post why we need to start the network's weights from small random values. For now, we will just begin with a default value of 1.01.
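To get a feel for what "good weights" means here: this toy model is just `data * weight` with a single training example, so the weight that gives zero error can be solved for by hand as `realPriceNextDay / currentStockPrice`. The sketch below assumes the same two prices used in this article, and `idealWeight` is a hypothetical name. This shortcut only works because the problem is so tiny, which is exactly why real networks have to learn their weights instead.

```javascript
// Assumption: the same toy values as in this article (one input, one known target)
const realPriceNextDay = 225
const currentStockPrice = 220

// predict(data, weight) = data * weight has zero error when data * weight === target,
// so with a single training example the best weight is simply target / data
const idealWeight = realPriceNextDay / currentStockPrice

console.log(idealWeight)        // roughly 1.0227
console.log(idealWeight - 1.01) // gap between the starting weight and the ideal one (about 0.0127)
```

This is the value that the training further down tries to approach, starting from 1.01.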

Below is the starting Javascript code for our example:

```javascript
const realPriceNextDay = 225
const currentStockPrice = 220
let weight = 1.01

const predict = (data, weight) => data * weight
```

Using the untrained network

Our goal is to minimize the error between the predictions generated by our model's predict() function and realPriceNextDay.

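To measure this error, we will use a mean squared error function. Since we only have one data point, the "mean" here is simply the squared difference between the prediction and the real price. I encourage you to read this article about why we use a squared error instead of just doing a simple subtraction.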
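One quick way to see the problem with plain subtraction is that errors with opposite signs cancel out when they are averaged. The sketch below uses two hypothetical helpers, `meanError` and `meanSquaredErrorOf`, and made-up predictions purely for illustration; it is not code from this article's model.

```javascript
// Hypothetical helper: mean squared error over several predictions
const meanSquaredErrorOf = (predictions, reals) => {
  let sum = 0
  for (let i = 0; i < predictions.length; i++) {
    sum += (predictions[i] - reals[i]) ** 2
  }
  return sum / predictions.length
}

// Hypothetical helper: plain (signed) mean error, shown only for comparison
const meanError = (predictions, reals) => {
  let sum = 0
  for (let i = 0; i < predictions.length; i++) {
    sum += predictions[i] - reals[i]
  }
  return sum / predictions.length
}

// Made-up example: one prediction 2 USD too high, one 2 USD too low
const predictions = [227, 223]
const reals = [225, 225]

console.log(meanError(predictions, reals))          // 0: the two misses cancel out
console.log(meanSquaredErrorOf(predictions, reals)) // 4: both misses are counted
```

Squaring also makes larger misses count more than small ones, which gives training a stronger push when the prediction is far off.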

We will first test our model to see how far it is from making a good prediction:

```javascript
const realPriceNextDay = 225
const currentStockPrice = 220
let weight = 1.01

// Squared difference between the prediction and the real value
const meanSquaredError = (prediction, real) => {
  return (prediction - real) ** 2
}

const predict = (data, weight) => data * weight

// Runs the model once and logs the prediction and its error
const makePrediction = (msg) => {
  console.group(msg)
  const prediction = predict(currentStockPrice, weight)
  const error = meanSquaredError(prediction, realPriceNextDay)
  console.log(`The real stock price is ${realPriceNextDay} USD`)
  console.log(`Stock price prediction is ${prediction} USD`)
  console.log(`meanSquaredError = ${error}`)
  console.groupEnd()
}

makePrediction('BEFORE TRAINING')
```

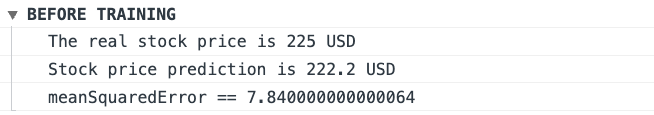

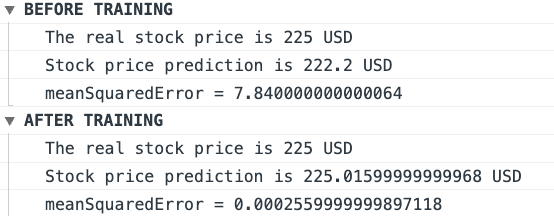

Our untrained Javascript model predicts a price of 222.2 USD, versus the real price of 225 USD, with a meanSquaredError of 7.840000000000064.
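The arithmetic behind these numbers is 220 × 1.01 = 222.2 and (222.2 - 225)² = (-2.8)² = 7.84. The extra digits at the end of 7.840000000000064 come from floating-point rounding: Javascript numbers are 64-bit binary floats, and values such as 1.01 and 222.2 cannot be stored exactly. A small check, using the same values as the article:

```javascript
// Same values as the article's "BEFORE TRAINING" run
const prediction = 220 * 1.01
const error = (prediction - 225) ** 2

console.log(prediction)       // 222.2
console.log(error === 7.84)   // false: the result carries a tiny rounding error
console.log(error.toFixed(4)) // "7.8400"
```

Rounding only for display (for example with `toFixed`) hides this noise without changing the value the model works with.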

Let's see if we can improve this!

Training the Javascript neural network

When training a neural network, the goal is to tweak the weights so that the error gets as small as possible.

Through repeated exposure to the training data, we nudge the weight up or down. Our training data is super small: just the one price value. In real applications, we would have a much larger volume of training data.

The amount by which we modify the weight at each step is stored in its own constant, const change = 0.0001. It plays the role of the learning rate: it controls how big each adjustment is.

Each time our model goes through the training data once, an epoch is completed.

Below is the code for our training function:

```javascript
const change = 0.0001

// Move the weight in whichever direction produced the smaller error
const adjustWeight = (currentWeight, errorUp, errorDown) => {
  if (errorUp < errorDown) {
    return currentWeight + change
  }
  return currentWeight - change
}

const trainModel = (target, epochs) => {
  for (let i = 0; i < epochs; i++) {
    // Try the weight slightly higher and slightly lower
    const guessUp = predict(currentStockPrice, weight + change)
    const guessDown = predict(currentStockPrice, weight - change)
    const errorUp = meanSquaredError(guessUp, target)
    const errorDown = meanSquaredError(guessDown, target)
    weight = adjustWeight(weight, errorUp, errorDown)
  }
}
```

What we do here is move the weight up and down and keep whichever direction gives the smaller error.
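The up/down search above only compares error values and never looks at their slope. For comparison, a more common way to train a weight is gradient descent, where the learning rate multiplies the derivative of the error instead of being a fixed step. This is a different technique from the one used in this post, shown only as a sketch: `trainWithGradient`, the learning rate of 0.000001 and the 200 epochs are assumptions picked for these particular prices.

```javascript
// Same toy values as in this article
const realPriceNextDay = 225
const currentStockPrice = 220

const predict = (data, weight) => data * weight

// Assumed learning rate for this sketch; it must be tiny because the
// gradient scales with currentStockPrice ** 2 (48,400 here)
const learningRate = 0.000001

// Gradient descent: the derivative of (data * w - target) ** 2 with respect to w
// is 2 * (data * w - target) * data, and we step against it
const trainWithGradient = (weight, target, epochs) => {
  for (let i = 0; i < epochs; i++) {
    const prediction = predict(currentStockPrice, weight)
    const gradient = 2 * (prediction - target) * currentStockPrice
    weight = weight - learningRate * gradient
  }
  return weight
}

const trainedWeight = trainWithGradient(1.01, realPriceNextDay, 200)
console.log(predict(currentStockPrice, trainedWeight)) // very close to 225
```

Because each step shrinks as the error shrinks, this version does not keep bouncing around the target the way a fixed `change` does. The trade-off is that the learning rate has to be tuned: with an input of 220, a learning rate above roughly 0.00002 would make every step overshoot further and the weight would diverge.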

Using the trained network

It's time to put our trained model to work and compare the results. We will start with 1000 epochs:

```javascript
makePrediction('BEFORE TRAINING')
trainModel(realPriceNextDay, 1000)
makePrediction('AFTER TRAINING')
```

So, we managed to improve the squared error from 7.840000000000064 to 0.0002559999999897118. Pretty good job!
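Why doesn't the error reach zero? With a fixed step of `change = 0.0001` starting from 1.01, the weight can only take values 1.01 + k × 0.0001, and the ideal weight 225 / 220 ≈ 1.022727 falls between 1.0227 and 1.0228. Once training gets there, it keeps jumping between those two values. At 1.0228 the prediction is 220 × 1.0228 = 225.016 USD, and 0.016² = 0.000256, which matches the squared error reported after training. The sketch below re-implements the same up/down rule with a hypothetical `traceTraining` helper that records the weight after every epoch:

```javascript
// Same values and update rule as the article's trainModel
const realPriceNextDay = 225
const currentStockPrice = 220
const change = 0.0001

const predict = (data, weight) => data * weight
const squaredError = (prediction, real) => (prediction - real) ** 2

// Hypothetical helper: runs the up/down training and records the weight after every epoch
const traceTraining = (startWeight, target, epochs) => {
  let weight = startWeight
  const weights = []
  for (let i = 0; i < epochs; i++) {
    const errorUp = squaredError(predict(currentStockPrice, weight + change), target)
    const errorDown = squaredError(predict(currentStockPrice, weight - change), target)
    weight = errorUp < errorDown ? weight + change : weight - change
    weights.push(weight)
  }
  return weights
}

const weightHistory = traceTraining(1.01, realPriceNextDay, 1000)

// The last few weights alternate between about 1.0227 and 1.0228
console.log(weightHistory.slice(-4).map((w) => w.toFixed(4)))
```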

You can play with different values for the epochs and the change and see if you can improve the error.
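One way to run that experiment is to wrap the training in a function that takes `change` and the number of epochs as parameters. `trainAndMeasure` below is a hypothetical helper that re-implements the same up/down rule without the global `weight`; the particular values in the loops are arbitrary choices for the experiment.

```javascript
const realPriceNextDay = 225
const currentStockPrice = 220

const predict = (data, weight) => data * weight
const squaredError = (prediction, real) => (prediction - real) ** 2

// Hypothetical helper: the up/down training from this article, with the step size
// and the number of epochs passed in; returns the squared error after training
const trainAndMeasure = (change, epochs, startWeight = 1.01) => {
  let weight = startWeight
  for (let i = 0; i < epochs; i++) {
    const errorUp = squaredError(predict(currentStockPrice, weight + change), realPriceNextDay)
    const errorDown = squaredError(predict(currentStockPrice, weight - change), realPriceNextDay)
    weight = errorUp < errorDown ? weight + change : weight - change
  }
  return squaredError(predict(currentStockPrice, weight), realPriceNextDay)
}

for (const change of [0.001, 0.0001, 0.00001]) {
  for (const epochs of [100, 1000, 5000]) {
    console.log(`change=${change} epochs=${epochs} error=${trainAndMeasure(change, epochs)}`)
  }
}
```

In this setup, a smaller `change` lets the weight settle closer to 225 / 220, but it also needs more epochs to get there: with `change = 0.00001`, 1000 epochs only move the weight from 1.01 to about 1.02, still short of the target.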

Below is the full code for our example:

```javascript
const realPriceNextDay = 225
const currentStockPrice = 220
const change = 0.0001
let weight = 1.01

// Squared difference between the prediction and the real value
const meanSquaredError = (prediction, real) => {
  return (prediction - real) ** 2
}

// The whole "network": one input multiplied by one weight
const predict = (data, weight) => data * weight

// Move the weight in whichever direction produced the smaller error
const adjustWeight = (currentWeight, errorUp, errorDown) => {
  if (errorUp < errorDown) {
    return currentWeight + change
  }
  return currentWeight - change
}

// Each iteration is one epoch over our single training example
const trainModel = (target, epochs) => {
  for (let i = 0; i < epochs; i++) {
    const guessUp = predict(currentStockPrice, weight + change)
    const guessDown = predict(currentStockPrice, weight - change)
    const errorUp = meanSquaredError(guessUp, target)
    const errorDown = meanSquaredError(guessDown, target)
    weight = adjustWeight(weight, errorUp, errorDown)
  }
}

// Runs the model once and logs the prediction and its error
const makePrediction = (msg) => {
  console.group(msg)
  const prediction = predict(currentStockPrice, weight)
  const error = meanSquaredError(prediction, realPriceNextDay)
  console.log(`The real stock price is ${realPriceNextDay} USD`)
  console.log(`Stock price prediction is ${prediction} USD`)
  console.log(`meanSquaredError = ${error}`)
  console.groupEnd()
}

makePrediction('BEFORE TRAINING')
trainModel(realPriceNextDay, 1000)
makePrediction('AFTER TRAINING')
```

You can check out the CodePen example here.

Also, please remember that this is just a super simplified implementation. Its purpose is to show the very basics of how neural networks work. We will look at more complex implementations in the next articles.

📖 50 Javascript, React and NextJs Projects

Learn by doing with this FREE ebook! Not sure what to build? Dive in with 50 projects with project briefs and wireframes! Choose from 8 project categories and get started right away.
