The Langchain.js framework makes it easy to integrate LLMs (Large Language Models) such as OpenAI's GPT with our JavaScript-based apps.

There are many things Langchain can help us with, but in this tutorial we will focus on getting a first ReactJs and Langchain example up and running.

We will build a small ReactJs app that will search for synonyms of a given word, in 4 different languages, using ChatGPT. You can see the final demo in action in this video:

Besides connecting to our first Langchain AI model, we will also use chains, output parsers, and chat prompt templates.

If you want to skip ahead, the full source of the example is on my GitHub. Please note that you need to create a .env file where you set your VITE_OPENAI_KEY key. You can also check out this article on other things we can do with Langchain as JavaScript developers.

Installing Langchain.js and setting up the OpenAI API key

We will use a Vite ReactJs boilerplate for this example.

```bash
npm create vite@latest langchain-synonyms -- --template react
cd langchain-synonyms
npm install
```

The next step will be to install Langchain.js. Since our code imports ChatOpenAI from @langchain/openai and the prompt and parser classes from @langchain/core, we install those packages together with langchain:

```bash
npm i langchain @langchain/openai @langchain/core
```

We will also need an OpenAI API key to use the GPT model. So, head to https://platform.openai.com/api-keys and create a key. We will store it in a .env file in the root of the langchain-synonyms folder.

```
# 📁 file path: .env
VITE_OPENAI_KEY=PUT_API_KEY_HERE
```

We can now start the app by running:

```bash
npm run dev
```

Creating our first Langchain.js AI model

Now that we have installed the Langchain npm modules and obtained our API key, we can create our first GPT model.

For this, we will use the ChatOpenAI class from Langchain. Below is the full code that will achieve this.

// 📁 file path: src/App.jsx

import { useEffect, useRef, useState } from 'react';

import { ChatOpenAI } from "@langchain/openai";

function App() {

const model = useRef(null)

const [answer, setAnswer] = useState('')

useEffect(()=> {

model.current = new ChatOpenAI({

openAIApiKey: import.meta.env.VITE_OPENAI_KEY,

})

}, [])

const askGPT = async (word, lang) => {

const question = "Find 3 synonyms for " + word + " in the language " + lang

const response = await model.current.invoke(question)

console.log(response)

setAnswer(response.content)

}

const onSubmitHandler = ()=> askGPT('cat', 'italian')

return (

<>

<h1>🤖 GPT synonyms generator</h1>

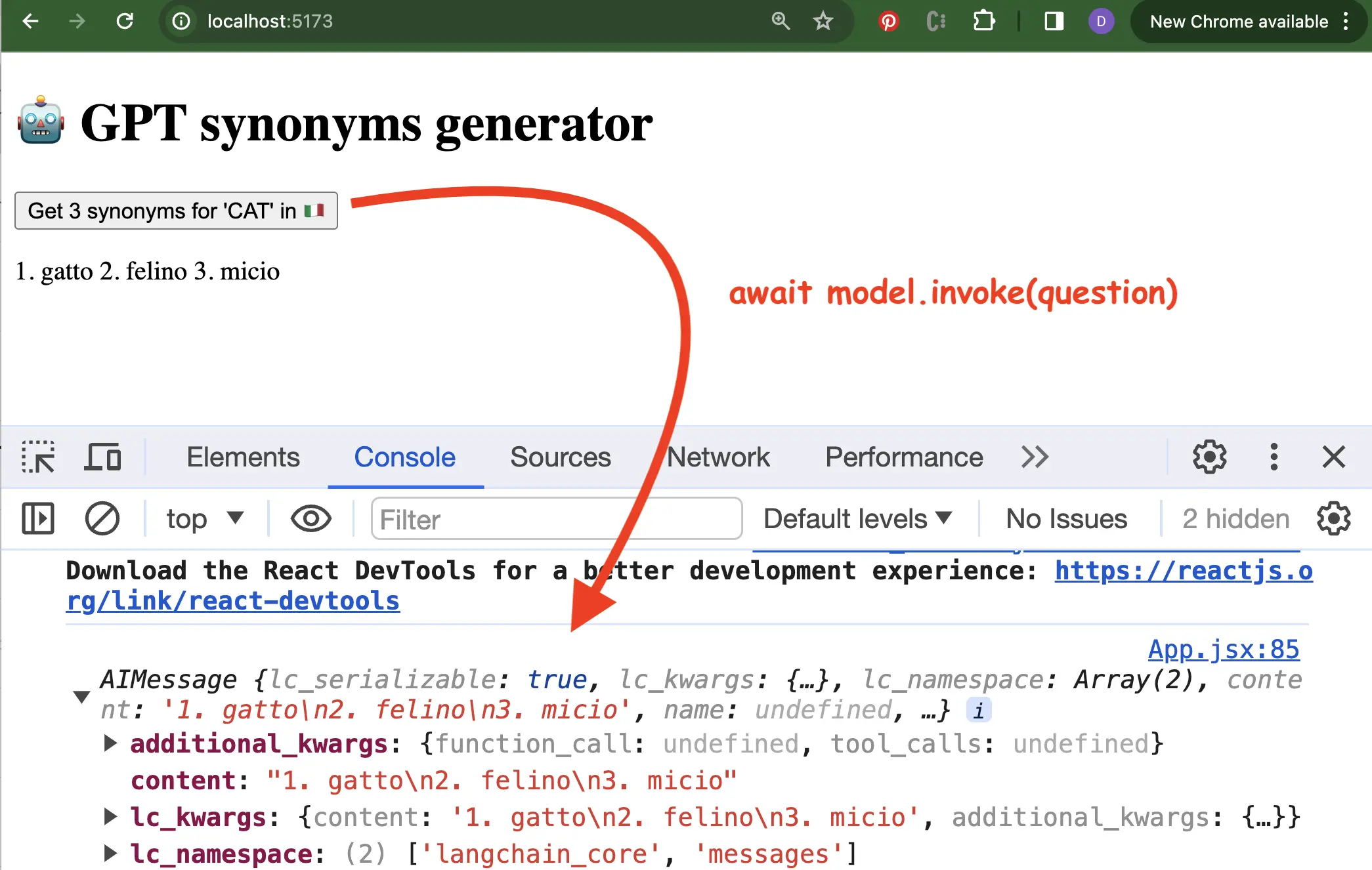

<button onClick={onSubmitHandler}>Get 3 synonyms for 'CAT' in 🇮🇹</button>

<p>{answer}</p>

</>

)

}

export default AppWe just want to create a new instance of the ChatOpenAI when the component runs, therefore the useRef() hook to keep value between renders for the model.
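If the .env file is missing or the variable name is misspelled, Vite leaves import.meta.env.VITE_OPENAI_KEY as undefined and the model is created without a key. A small guard can turn that into an explicit error that names the variable. The sketch below assumes a hypothetical readOpenAIKey() helper (not part of Langchain or Vite) that takes the env object as a parameter, so it can also run outside the browser.

```javascript
// Hypothetical helper (not part of Langchain or Vite): returns the key from an
// env-like object, or throws if it is missing or still the .env placeholder
function readOpenAIKey(env) {
  const key = env.VITE_OPENAI_KEY
  if (!key || key === 'PUT_API_KEY_HERE') {
    throw new Error('VITE_OPENAI_KEY is missing: add it to the .env file in the project root')
  }
  return key
}

// In src/App.jsx it could be used like this:
//   new ChatOpenAI({ openAIApiKey: readOpenAIKey(import.meta.env) })
```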

When we press the button, the asynchronous invoke() method of the model resolves to an AIMessage instance. From there, we can read the received answer from its content property.
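To see the await-then-read-content step without calling OpenAI, the sketch below swaps the real model for a hypothetical stand-in object (stubModel) whose invoke() resolves to a plain object with a content string. It only mimics the part of an AIMessage we use here; a real AIMessage carries more fields.

```javascript
// Hypothetical stand-in for ChatOpenAI, so the example runs without an API key:
// invoke() resolves to an object with a content string, like an AIMessage
const stubModel = {
  invoke: async (question) => ({ content: 'Answer to: ' + question }),
}

// Same steps as askGPT(): build the question, await invoke(), read .content
async function askModel(model, word, lang) {
  const question = 'Find 3 synonyms for ' + word + ' in the language ' + lang
  const response = await model.invoke(question)
  return response.content
}

askModel(stubModel, 'cat', 'italian').then((answer) => console.log(answer))
// prints "Answer to: Find 3 synonyms for cat in the language italian"
```

Passing the model in as an argument, instead of reading model.current, is what lets the same logic run against the stub; in the component, askGPT() keeps using the ref.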

Setting up the ReactJs UI

At the moment, we send the same static query to the AI model each time:

```javascript
// word is 'cat'
// lang is 'italian'
"Find 3 synonyms for " + word + " in the language " + lang
```

Therefore, it's time to wire up the Langchain model to a ReactJs interface:

function App() {

// ...

const onSubmitHandler = (event)=> {

event.preventDefault()

const word = event.target.word.value

const lang = event.target.lang.value

askGPT(word, lang)

}

return (

<>

<h1>🤖 GPT synonyms generator</h1>

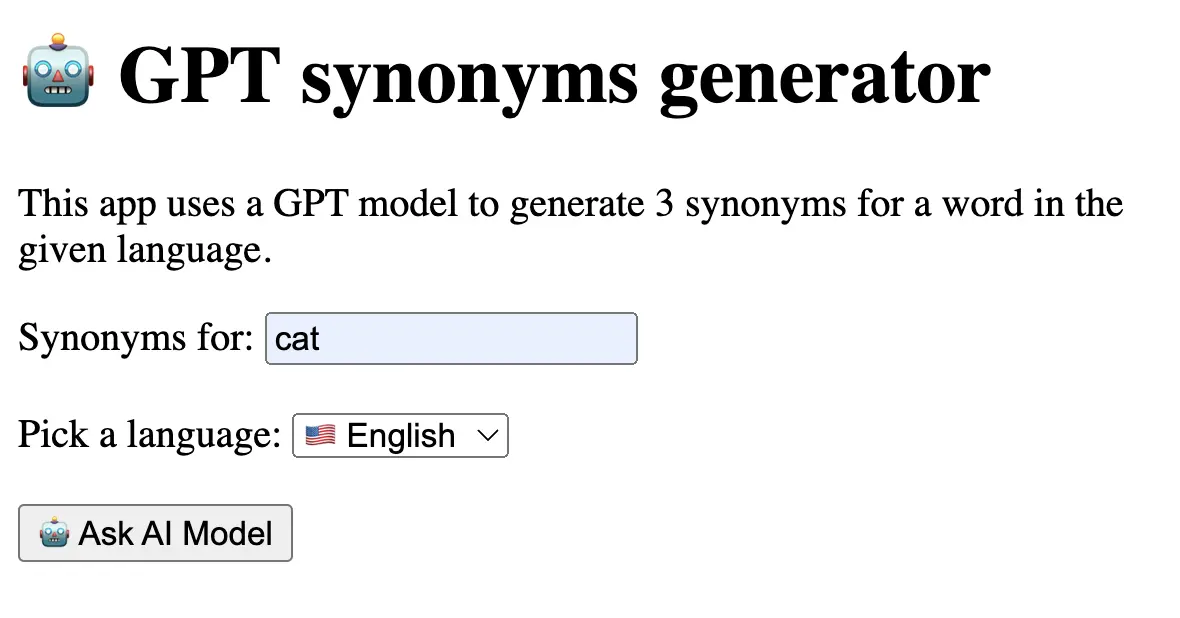

<p>This app uses a GPT model to generate 3 synonyms

for a word in the given language.</p>

<form onSubmit={onSubmitHandler}>

<label htmlFor="word">Synonyms for: </label>

<input name='word' placeholder='word' />

<label htmlFor="lang">Pick a language: </label>

<select name="lang">

<option value="english">🇺🇸 English</option>

<option value="spanish">🇪🇸 Spanish</option>

<option value="frech">🇫🇷 French</option>

<option value="italian">🇮🇹 Italian</option>

</select>

<button>🤖 Ask AI Model</button>

<p>{answer}</p>

</form>

</>

)

}With this, our app will ask the same thing as before, just that now it will retrieve the word and lang parameters from the user's input:
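Since each submit triggers a paid API request, it can be worth checking the input before calling askGPT(). Below is a sketch with a hypothetical readSynonymRequest() helper (not something Langchain or React provides) that trims the word and only accepts the language values offered by the select element; in onSubmitHandler it would receive event.target.word.value and event.target.lang.value.

```javascript
// Must match the value attributes of the <option> elements in the form
const LANGUAGES = ['english', 'spanish', 'french', 'italian']

// Hypothetical helper: returns { word, lang } when the input is usable,
// or null when the word is empty or the language is not in the list
function readSynonymRequest(rawWord, rawLang) {
  const word = rawWord.trim()
  if (word === '' || !LANGUAGES.includes(rawLang)) {
    return null
  }
  return { word, lang: rawLang }
}

// Inside onSubmitHandler:
//   const request = readSynonymRequest(event.target.word.value, event.target.lang.value)
//   if (request) askGPT(request.word, request.lang)
```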

Adding a ChatPromptTemplate and our first chain

There are a couple of improvements we can make to our small ReactJs app.

First, let's take a look at the query we are sending to the invoke() function. Right now it looks like so:

```javascript
const question = "Find 3 synonyms for " + word + " in the language " + lang
const response = await model.current.invoke(question)
```

While we could slightly improve the question by using ES6 template literals, a much better way is to use the ChatPromptTemplate class from Langchain.js:

```javascript
import { ChatPromptTemplate } from "@langchain/core/prompts"

// ...

const askGPT = async (word, lang) => {
  // {word} and {language} are filled in from the object passed to invoke()
  const prompt = ChatPromptTemplate.fromMessages([
    [
      "system",
      "Give 3 synonyms for {word} in {language} separated by comma (eg: `abc, def, ghi`)",
    ],
    ["human", "{word}"],
  ])
  // the formatted prompt becomes the input of the model
  const chain = prompt.pipe(model.current)
  const response = await chain.invoke({ word: word, language: lang })
  console.log(response)
  // the chain ends with the model, so we still get an AIMessage back
  setAnswer(response.content)
}
```

Later we can store and use these types of messages to build a chat context for the model.
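The {word} and {language} placeholders are filled in from the object we pass to invoke(). The sketch below uses a hypothetical, simplified fillTemplate() function (not Langchain's implementation, which also deals with message roles and input validation) to show the idea, next to the ES6 template literal mentioned earlier: the literal needs the values when the string is built, while the template can be defined once and filled later.

```javascript
// ES6 template literal: the values must exist when the string is built
const word = 'lion'
const lang = 'english'
const literal = `Give 3 synonyms for ${word} in ${lang}`

// Hypothetical, simplified placeholder filling: the template is defined once
// and the values are supplied later, similar in spirit to ChatPromptTemplate
function fillTemplate(template, values) {
  return template.replace(/\{(\w+)\}/g, (match, name) => {
    if (!Object.prototype.hasOwnProperty.call(values, name)) {
      throw new Error('Missing value for {' + name + '}')
    }
    return String(values[name])
  })
}

const templated = fillTemplate('Give 3 synonyms for {word} in {language}', {
  word: 'lion',
  language: 'english',
})

console.log(literal === templated) // prints true
```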

Besides adding a parameterized ChatPromptTemplate, we now call the model through a chain, created with the pipe() method.

In a chain, the output of each step serves as the input for the next one. Remember method chaining from the old jQuery days:

```javascript
$('#app')
  .fadeOut(100)
  .fadeIn(100)
  .remove()
```

As we will see next, a cool thing about chains is that we can link multiple steps: add output parsers, or even add our own custom functions so that we can interact with other models, manipulate data, and more.
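To make the data flow concrete, here is a synchronous toy version of a chain: a hypothetical pipe() helper that runs the steps in order and feeds each step's output into the next. Langchain's real pipe() works on runnables and is asynchronous; the prompt, model, and parser steps below are stand-ins that only mimic their roles.

```javascript
// Hypothetical toy chain: each step receives the previous step's output
const pipe = (...steps) => (input) => steps.reduce((value, step) => step(value), input)

// Stand-in for the prompt: turns the input object into a text prompt
const formatPrompt = ({ word, language }) =>
  `Give 3 synonyms for ${word} in ${language} separated by comma`

// Stand-in for the model: returns a fixed message instead of calling OpenAI
const fakeModelStep = (_prompt) => ({ content: 'king of the jungle, big cat, feline predator' })

// Stand-in for an output parser: turns the message content into an array
const toList = (message) => message.content.split(',').map((item) => item.trim())

const chain = pipe(formatPrompt, fakeModelStep, toList)
console.log(chain({ word: 'lion', language: 'english' }))
// prints [ 'king of the jungle', 'big cat', 'feline predator' ]
```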

Using Langchain's CommaSeparatedListOutputParser

Note that in the previous step I also gave the model a better prompt that explains how I want the result to be returned: the part with separated by comma (eg: abc, def, ghi).

For example, if I now ask it for 3 English synonyms of the word "lion", it will send the following output:

```text
"king of the jungle, big cat, feline predator"
```

Langchain.js also comes with a set of tools named output parsers. In essence, output parsers take the raw text returned by the model and turn it into a format that is easier to work with in our code.
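Conceptually, a comma-separated list parser only has to split the model's text on commas and trim each piece. The hypothetical parseCommaList() below is a rough approximation written for illustration, not Langchain's source; the app itself uses the built-in parser.

```javascript
// Hypothetical approximation of a comma-separated list parser:
// split on commas, trim whitespace, and drop empty entries
function parseCommaList(text) {
  return text
    .split(',')
    .map((item) => item.trim())
    .filter((item) => item.length > 0)
}

console.log(parseCommaList('king of the jungle, big cat, feline predator'))
// prints [ 'king of the jungle', 'big cat', 'feline predator' ]
```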

For this example, we can use the CommaSeparatedListOutputParser() so that we get the answer directly as an array:

```javascript
import { CommaSeparatedListOutputParser } from "@langchain/core/output_parsers"

// ...

const askGPT = async (word, lang) => {
  // ...
  const parser = new CommaSeparatedListOutputParser()
  // prompt -> model -> parser: the parser turns the AIMessage into an array of strings
  const chain = prompt.pipe(model.current).pipe(parser)
  const response = await chain.invoke({ word: word, language: lang })
  // we now get the data directly and no longer need response.content
  console.log(response)
  setAnswer(response)
}
```

With this, we can now map over the elements and render them in a list:

```jsx
// answer now holds an array, so it starts as an empty one
const [answer, setAnswer] = useState([])

// ...

<ul>
  {answer.map((item, i) => <li key={i}>{item}</li>)}
</ul>
```

Conclusion

With this, we have built our first example of integrating an LLM into a ReactJs app using Langchain. The full code of the example is on my GitHub. Remember to create a .env file where you set your VITE_OPENAI_KEY key. You can start the app with npm run dev.

If you have any questions, don't hesitate to leave a comment below.

Be smart, be kind, and keep coding!

📖 50 Javascript, React and NextJs Projects

Learn by doing with this FREE ebook! Not sure what to build? Dive in with 50 projects with project briefs and wireframes! Choose from 8 project categories and get started right away.